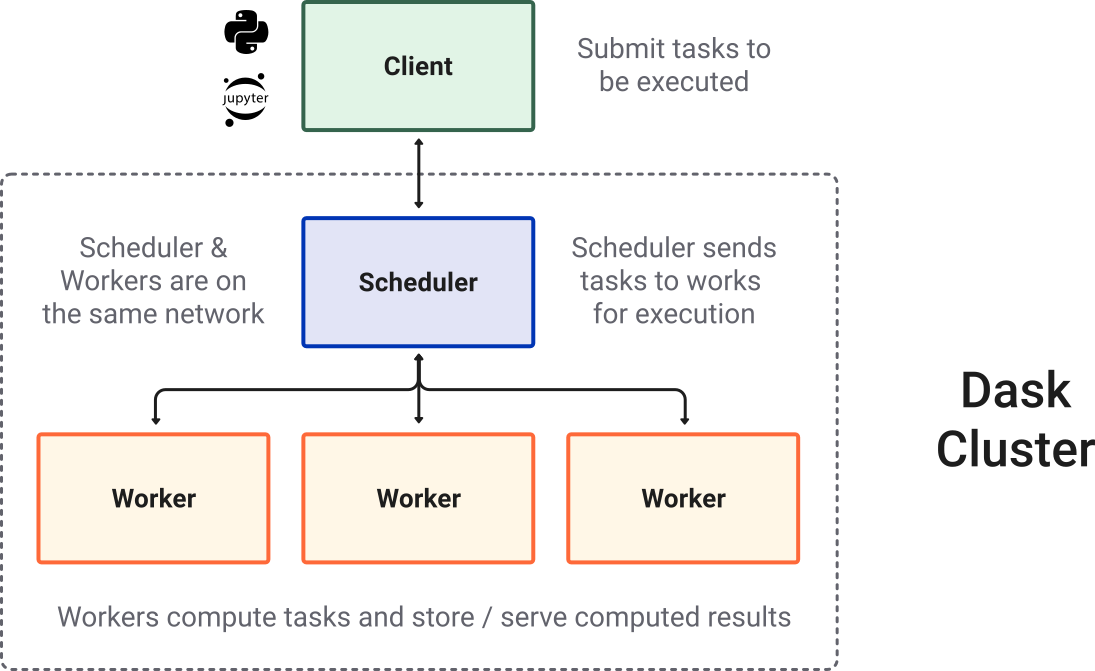

Run Heavy Prefect Workflows at Lightning Speed with Dask | by Richard Pelgrim | Towards Data Science

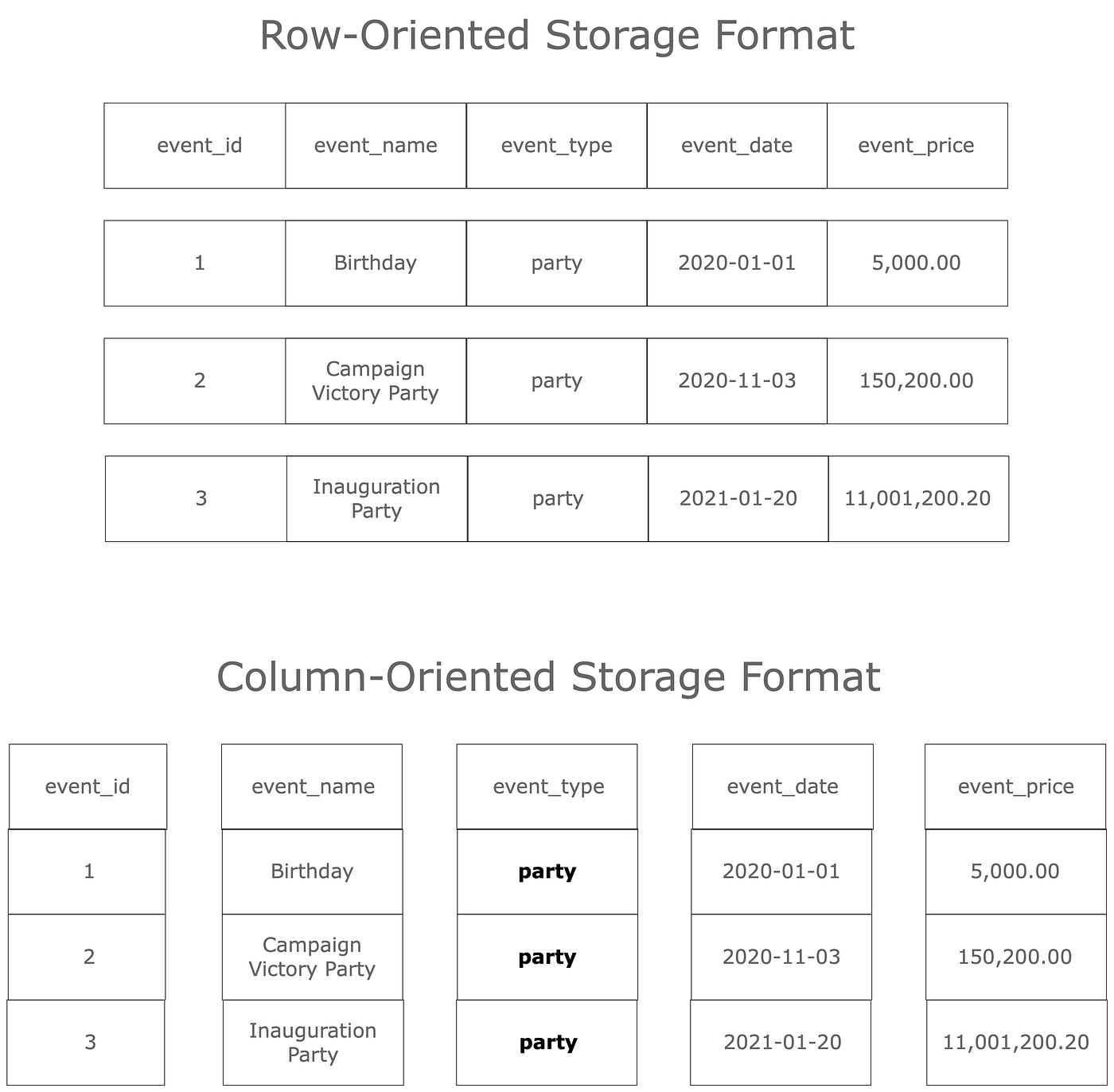

Parquet reading/writing is non-deterministic for multi-index frames · Issue #6484 · dask/dask · GitHub

Python and Parquet performance optimization using Pandas, PySpark, PyArrow, Dask, fastparquet and AWS S3 | Data Syndrome Blog

python - Using set_index() on a Dask Dataframe and writing to parquet causes memory explosion - Stack Overflow

4 Ways to Write Data To Parquet With Python: A Comparison | by Antonello Benedetto | Towards Data Science

Converting Huge CSV Files to Parquet with Dask, DuckDB, Polars, Pandas. | by Mariusz Kujawski | Medium